Kamala Harris may well be the next president of the United States, simply because she is not ten million years old. You can trust her to safely operate a motor vehicle — and her campaign to run a livestream.

But her team recently crossed @dril, the legendary online comedian, by distributing one of his posts in an official campaign press release — the one where he jokes: “and another thing: im not mad. please dont put in the newspaper that i got mad.” He really, really, profoundly didn’t like that.

If you’re reading this, you either extremely know who @dril is, or will never quite know who he is. You know him or you don’t, and trying to describe him or summarize him takes some of the magic away. But my mom is gonna read this so I’ll briefly try. Basically, he’s an internet comedian. All his posts are in-character and his fans play along. He’s sort of a parody of angry, arrogant, semi-literate, and vaguely insane middle-aged men from the old days of the internet, like when IMDb had message boards.

Imagine a 53-year-old whose kid just taught him how to use the computer. Now imagine him writing the weirdest review of a Clint Eastwood movie you’ve ever seen, or getting on Amazon to holler at length about how his refrigerator was a scam, useless, and made by communists. Posting in the YouTube comments to an REO Speedwagon song about how his divorce is the worst event in modern American history. Permabanned from a model train forum. A little bit Dale Gribble, a little bit Andy Rooney. Has inscrutable, super aggressive opinions about politics but you strongly suspect he doesn’t vote. You’re about halfway there. Let’s call that close enough. Alternatively, you can read his Wikipedia entry, which is about as long and detailed as the Wikipedia entry for a dead president.

Back to Harris. I’m curious about the whole affair. Trying to score internet “cool” points off @dril strikes me as odd. He started doing this act about 15 years ago; it’s not exactly new. I’m no fan of politicians, but I wouldn’t have had the guts to be so hardline in my reaction. @dril posted that the Harris press release was worse than the U.S. supporting the Israeli military and its notoriously abusive detention centers, where soldiers were recently accused of raping a Palestinian detainee.

So I decided to track down @dril for a candid interview.

To get to him, I first have to interview his business manager and bodyguard and “creator” Paul, who happens to be a buddy of mine. He’s a withdrawn, quiet dude in his mid-30s who chooses his words carefully. Very polite. Basically the exact opposite of @dril, who describes himself on Twitter as a “popular and famous Actor and Media Mogul who has cured his own mental illness over 100 times.”

We talk about all the issues that matter: Israel and Palestine, how the Cybertruck is somehow more horrible in person, Bruce Willis’ blues album The Return of Bruno, and how tiring it must be to exist as @dril: It’s a comedic performance that people act almost entitled to. The frustration of crafting something and an audience not treating it like craft and instead as part of the fabric of the internet, something that just is rather than something that is built, must run deep.

After I finish talking to Paul, I’m connected to @dril, with one hardline condition: that the interview be conducted by email. He claims it’s because he has “the covid shits.” That’s fine by me, because @dril is a unique project in that he kinda has to be inside the computer. Bringing him into the L.A. sunlight ruins something about it. This is a character who lives in a fictional world and he’s fundamentally a keyboard warrior. It’d be like taking away George Burns’ cigar.

I ask @dril for his reaction to seeing his words at the top of a press release from somebody Vegas has as the likely winner of the 2024 presidential election.

“Oh, I just about shit,” he says. “It was a total surprise to me, considering people who play any role in a presidential campaign are typically compensated somehow. I know they aren’t hurting for money right now, they must have raised like half a billion dollars up to this point. So, they must hate me, or otherwise wish me dead.”

It reminds me of the fundamental weird thing about Twitter: @dril is worth a lot of money as a concept. He has almost two million followers. Catches the ear of billionaires and presidents. Had an actual feud with Elon Musk. The sort of people who would never ever let you, any of us, through their security gate. But it’s really hard to convert that to dollar one and it sucks.

So I ask him if he thinks presidential campaigns should be punished or humiliated for reappropriating his work like this.

“I think they should be forced to apologize publicly,” writes @dril, “in an unnecessary and embarrassing display of fealty towards me and my posts, as well as compensate me for the full value of the tweet, which I estimate would be about $25.00.”

I tell him the musician, actor, and songwriter Isaac Hayes’ estate is currently suing the Donald Trump campaign for 120,000 times more money than that for playing his song. I ask if he feels kinship with Hayes, another Great Man of History victimized by the fundamental stupidity of American presidential politics.

“I see no similarities between the soulful stylings of the beloved Isaac Hayes and the dreck that I put on the computer,” he says. “If anything, I should be paying the new media toilet robber who pilfered my waste product for the free exposure that this incident has provided me. My posts strictly belong in the dumpster. They must never be allowed in any emails, especially not any emails associated with the Resolute Desk. They are Beneath the office.”

But would this situation be any different if the Harris campaign had used a different tweet? Is @dril’s animosity toward his new unexpected visibility related in any way to the post they picked? I mean, no, definitely not, but I want to hear it from him.

He responds: “Well, it’s not the tweet I personally would have picked to send to millions of people to prove some sort of point about human emotion. When I wrote that tweet, I think I had more petty issues in mind. I did not consider that it would be used by a presidential candidate to ‘madshame’ their opponent. And that’s fucked, because I think it’s fine to be mad in certain cases, particularly during an election that everyone says could decide if one billion toddlers get bombed or not. If I genuinely believed that, I suppose I would also get mad, and that’s fine.”

I think of something his TruthPoint cohost and sometimes writing partner Derek Estevez-Olsen said when someone accused @dril of selling the account. “Truth is sadder than that,” Estevez-Olsen wrote. “Original guy killed himself on heroin and motorcycles. Person posting now is his daughter who got disabled in war and VA won’t pay her benefits.” He added that his source was an ongoing lawsuit, and the real @dril owed him $31,000 at time of death.

I ask @dril, for posterity, whether it’s true he sold the account, knowing of course it isn’t true because it doesn’t sound true when you say it out loud.

“How do you feel about these accusations?” After all, it would really piss me off if I’d been doing an act for over a decade and people widely used my material without compensating me and seriously suggested I didn’t even write my own posts.

“There’s no clearer indicator that I’ve ‘made it,’” he responds, “that people would absolutely refuse to believe I’d post something they do not like, to the point they’d rather believe I engaged in some shady marketing deal which seems specifically designed to piss off as many of my followers as possible and make $0.”

He continues: “It’s just something everyone in this business has to deal with. Take Andy Rooney, for instance. When his rants at the end of CBS’ 60 Minutes started becoming more and more deranged, many people with a dangerous, kindergarten-level understanding of the industry accused him of selling out his identity in order to promote another, less talented man who looks exactly like him. I think someone tried to put a bomb in his car. It was an ugly situation.”

So here we are. It’s 2024 and safe to say that @dril has finally hit peak visibility. Weird Twitter’s most famous survivor has, as far as I can tell, gone everywhere there is to go with his current character. He’ll never run out of material, he’s too clever, but there are no more mountains to climb.

“What character?” he says. “Ha ha. Just playing. I think I’ve taken this shit just about as far as it will go. ‘The Poster’ has become a hated and defiled concept. Soon, I will rebrand and pivot into producing inconceivable new forms of media that my followers will find very upsetting. I hope to purge about a million ‘Fake friends’ when I undertake this humiliating venture. Stay tuned.”

I have two last questions. I ask for his reaction to the sheer volume of hotshot writers and pundit types who want a piece of him.

“They are always welcome to threaten to whip my ass over email. However, we all know that they are much too cowardly to do so,” he says.

I ask how he would, in a perfect world, want to be compensated for the outrageous ubiquity of his material. It feels like writing for The Simpsons but somehow not getting royalties. He has like 30 tweets that are all in contention for the funniest post ever made on a computer.

He defines something about the internet. I don’t know what, but his style actually is part of it and will be for a long time.

“I think it’s the greatest honor,” @dril writes back, “to have your art screenshotted and commodified by the most annoying people online and deployed like chess pieces in obnoxious, inconsequential arguments, alongside such elevated content as animated gifs from NBC’s The Office and the funny cartoon frog that supposedly represents disenfranchised young white men. I’m truly happy just to be part of the conversation.”

The Rise of the Digital Oligarchy

On Jan. 11, 1994, I drove to UCLA’s Royce Hall to hear Vice President Al Gore deliver the keynote address at the Information Superhighway Conference. I was in the early stages of building Intertainer, which would become one of the first video-on-demand companies. The 2,000 people crowded into that auditorium did not know it, but they were crossing a threshold. The roster of speakers read like a who’s who of industrial power: TCI’s John Malone, Rupert Murdoch, Sony’s Michael Schulhof, Barry Diller of QVC. These were among the richest and most commanding figures in American communications. Today, their combined force and fortunes are a rounding error beside those of Elon Musk, Mark Zuckerberg, Peter Thiel, Jensen Huang, Jeff Bezos, and Marc Andreessen. The world the Hollywood moguls walked back out into would not, in any meaningful sense, be the world they had left.

Gore’s UCLA speech now reads like a confident moment in the early‑Clinton fantasia of managed modernization: the assumption that a lightly guided market, properly “incentivized,” could be coaxed into building a new civic commons. He framed the whole project as a public utility constructed with private capital, insisting that “the nation needs private investment to complete the construction of the National Information Infrastructure. And competition is the single most critical means of encouraging that private investment.” What is striking, in retrospect, is not the technophilia but the blithe certainty that “competition” would safeguard pluralism and access, that state‑designed market rules would prevent the emergence of bottlenecks and private tollbooths. The actual trajectory of the internet — toward a stack dominated at each layer by a handful of firms from carriers to platforms to ad brokers — renders the scene almost allegorical: an administration hymning competition as the guarantor of openness while midwifing, in practice, the consolidated, quasi‑monopolistic order that would eventually narrow and privatize the very public sphere it imagined itself to be creating.

For 150 years since the Industrial Revolution, Americans had trusted that science and technology would bind the nation together, just as railroads and the telegraph had once compressed its continental distances. The historian John P. Diggins observed that “whereas the very nature of politics in America implied division and conflict, science was seen as bringing forth cohesion and consensus.” That faith was about to be tested to destruction.

Within two years, Gore and Newt Gingrich collaborated to pass the Telecommunications Act of 1996, and buried inside it was a provision — Section 230 — that would prove more consequential than anything else in the bill. It granted the new platforms a liability shield unavailable to any other business in America: immunity from responsibility for the content their users generated, moderated, or amplified. The effect was to hand the architects of the digital age a license to build without obligation. Welcome to the Wild West; the platforms own the sheriff.

What followed was an era of rapacious accumulation. In 1994, the largest company in America by market capitalization was Exxon, valued at $34 billion. Today, Google is worth $3.7 trillion. And when Donald Trump took the oath of office in January 2025, flanked by the very technocratic elite whose fortunes had grown beyond all precedent, the possibility loomed that the preceding 10 years were crystallizing into a name: techno-fascism — an authoritarian, corporatist order in which a narrow caste of technocratic elites deploys digital infrastructure and artificial intelligence to automate governance, intensify surveillance, and erode democratic accountability, all while presenting their dominion as the neutral application of expertise.

For the past decade I have written about the almost theological divide between two competing creeds. The gospel of nostalgia promises to “make America great again” — its default logic being that the America of the 1950s, when white men’s assumptions went unchallenged by people of color, women, immigrants, or queer individuals, was a more stable and legible world worth recovering. The gospel of progress, as Andreessen has written, holds that “there is no material problem — whether created by nature or by technology — that cannot be solved with more technology.” Its default logic is simpler: stop complaining. Flat wages, rising social media–induced mental illness, falling homeownership, a warming planet — perhaps, but at least we have iPhones. But the philosopher Antonio Gramsci had foreseen this dialectic in 1930: “The old is dying and the new cannot be born. In this interregnum many morbid symptoms appear.”

After the Republican midterm disappointments of 2022, Thiel called for a party that could unite “the priest, the general, and the millionaire” — a formula that reads, with hindsight, as a precise blueprint for Trump’s second administration: Christian nationalism, military force deployed at home and abroad, and a financial oligarchy powerful enough to steer the state. By the election of 2024, the gospel of nostalgia and the gospel of progress had concluded a short-term bargain to elect Trump. The result is the rise of an oligarchy of fewer than 20 American families.

The Copernican Moment

A deep unsettlement runs beneath our society today. Just as Nicolaus Copernicus displaced the Earth from the center of the cosmos, we are now displacing the human from the center of consciousness. New discoveries about cognition in other animals and organisms — octopuses dreaming, bees counting, trees retaining memory of drought — suggest, as Michael Pollan has written, that thought and feeling are not human monopolies but properties of life itself. The first Copernican revolution humbled our astronomy; the second threatens to humble our very being.

Yet the revelation carries its twin anxiety. If mind is no longer our exclusive inheritance, what becomes of that inheritance when machines begin to mimic it? Artificial intelligence poses not merely a technical challenge but a metaphysical one. It asks whether consciousness can exist without vulnerability — without the pulse and jeopardy of a life that can be lost. The Portuguese neuroscientist Antonio Damasio reminds us that the brain evolved to serve the body, that consciousness begins in feeling. Machines, however elaborate, know no hunger, no pain, no desire. To be conscious in the human sense is to participate in necessity — to be held by one’s own fate.

The real danger is not that machines will become like us, but that we will become like them: efficient, unfeeling, exquisitely programmable. A people habituated to passivity and optimized for consumption may eventually forget the work of building a world together. What once belonged to politics — the imaginative labor of collective destiny — has been quietly surrendered to the corporate logic of the algorithm. The result is not enlightenment but enclosure: a society awake to everything except itself.

This interregnum, then, is not a pause but a rupture — a suspended time in which institutions still stand yet no longer persuade, in which the future arrives in forms no one quite intended. What began for my generation as the optimistic dream of a communications revolution has matured into a general condition of American life: a digital oligarchy adrift between orders, armed with enormous power but uncertain whom, or what, it serves. Some of us glimpsed the terrible risk when it was still only a risk — that the principles of kleptocracy would become America’s own. That grim vision is now arriving, in real time, in the person of Trump. As David Frum wrote in The Atlantic, “The brazenness of the self-enrichment now underway resembles nothing from any earlier White House, but rather the corruption of a post-Soviet republic or a postcolonial state.” And the techno-fascist oligarchs are at the trough, waiting to be fed.

The Age of Surveillance and Simulation

The first clear sign that the promise of the digital commons had curdled came with Edward Snowden’s disclosures in 2013, when Americans learned that Google and Facebook had opened their back doors to the security state. What had been marketed as an architecture of connection revealed itself also as an infrastructure of monitoring.

By the mid-2020s, the fear had hardened into habit. A 2025 YouGov survey found that nearly a quarter of Americans admitted to censoring their own posts or messages for fear of being watched or doxxed. Surveillance no longer needed a knock at the door. The mere awareness of a watching eye did the work. What had been a public square had become, almost imperceptibly, a panopticon of self-restraint.

Into this apparatus stepped a new class of private overseers. Palantir, the data-mining firm Thiel co-founded, grew from a counterterrorism instrument into a generalized engine for correlating personal information — tax filings, social media traces, the bureaucratic exhaust of ordinary life. Insiders warned that data citizens had surrendered to the IRS or Social Security for basic governance could be recombined for far more intrusive purposes. The point was not simply that we were being watched, but that we were being rendered legible — sorted, scored, and classified in ways invisible to us. As Anthropic’s CEO Dario Amodei told The New York Times, the Fourth Amendment’s prohibition on unreasonable search and seizure is effectively nullified by AI:

“It is not illegal to put cameras around everywhere in public space and record every conversation. It’s a public space — you don’t have a right to privacy in a public space. But today, the government couldn’t record that all and make sense of it. With AI, the ability to transcribe speech, to look through it, correlate it all, you could say: This person is a member of the opposition — and make a map of all 100 million. And so are you going to make a mockery of the Fourth Amendment by the technology finding technical ways around it?”

We are witnessing the first serious moral battle of the AI era, and its front lines run straight through the boardrooms of Silicon Valley. Anthropic drew them first. The company refused to allow its systems to be turned on the American public in the name of security and declined to let the Pentagon wire its AI into autonomous weapons capable of identifying and killing without human authorization. To the Defense Department, accustomed to purchasing compliance along with contracts, the idea that a vendor might set moral limits on military use was borderline insubordinate. Secretary of Defense Pete Hegseth designated Anthropic a supply-chain risk to national security. President Trump, on Truth Social, called the company “radical woke” and ordered federal agencies to stop using its technology. Anthropic had been, in effect, blacklisted for conscience.

What happened next revealed something important about the moral landscape of the AI industry. OpenAI, which had publicly positioned itself as sharing Anthropic’s red lines — Sam Altman insisted his company, too, opposed mass domestic surveillance and fully autonomous weapons — moved swiftly to fill the vacuum. While Anthropic was being frozen out of Washington, D.C., OpenAI quietly negotiated and signed a deal of its own with the Pentagon, granting the Defense Department access to its models for deployment in classified environments. OpenAI then published a blog post with a pointed aside: “We don’t know why Anthropic could not reach this deal, and we hope that they and more labs will consider it.” The company that had stood shoulder to shoulder with Anthropic in principle had, in practice, used Anthropic’s exclusion to capture the contract.

The backlash was swift — and came from inside the house. Caitlin Kalinowski, who had led OpenAI’s hardware and robotics teams since late 2024, publicly announced her resignation. Her statement, posted on X and LinkedIn, was brief and precise: “AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got. This was about principle, not people.”

The formulation was careful, almost scrupulously fair to her former colleagues. But the substance was damning. A senior technical executive, one who had spent her career building the physical systems through which AI meets the real world, had concluded that OpenAI had crossed lines it had publicly promised not to cross — and had done so without the internal deliberation those lines deserved. Some users canceled their ChatGPT subscriptions in protest. Claude, Anthropic’s AI assistant, became the number-one free app in the Apple App Store, displacing ChatGPT. The market, in its way, had registered a verdict.

What the episode exposed is the hierarchy of pressures operating on every AI company at this moment. Altman’s public statements and OpenAI’s private negotiations inhabited different moral universes, and the gap between them is a measure of how quickly principle buckles under the combined weight of government contracts, competitive anxiety, and the intoxicating proximity to power. Hegseth and Trump have sent the clearest possible signal: Companies that draw lines will be punished; companies that erase them will be rewarded. The outcome of this first moral battle of the AI era will do much to determine the shape of every battle that follows.

But erasure, in this case, is not incidental — it is the business model. The questions that seem separate — who controls the weapons, who watches the citizens, who owns the culture, whose labor trains the machine — are in fact a single question, asked of us all at once: whether humanity will remain the author of its own story, or be quietly written out of it.

The Technocracy’s Bargain

Artificial intelligence functions in this landscape not only as a tool, but also as an ideology. The systems that now summarize our news, grade our tests, and generate our images are built entirely from accumulated human expression, yet are heralded as replacements for the slow, wayward work of thought. By design they remix rather than originate; they automate style while evacuating risk. The consequence is a flood of synthetic prose and imagery that feels like culture but carries none of the scars of experience. Anyone with a prompt can simulate the surface of artistry, further collapsing the distinction between the crafted and the merely produced.

We need to insist on the human self as something more than a flicker of circuitry or an echo of stimulus — to hold that our consciousness is not reducible to mechanism, that our art, our music, our capacity for beauty and sorrow carry a dignity no machine can counterfeit. We need to imagine a future in which humanity still governs its own creation — not as the object of its inventions, but as their author and their measure. A world that offers consumption in place of purpose courts a different and more corrosive kind of unrest.

The outlines of that unrest were already legible by the middle of the decade. In labor reports and think-tank bulletins one could trace the quiet unmaking of the white-collar world. Young graduates, credentialed and deeply indebted, were discovering that the jobs they had trained for no longer existed in familiar form; whole categories of administrative and creative work were being absorbed by AI or retooled around its efficiencies. Commentators spoke of an “AI job apocalypse” not as metaphor but as demographic fact — an educated stratum slipping downward, its ambitions collapsing into precarity. History offers a warning: When a surplus of the educated meets a scarcity of opportunity, turbulence and unrest follow. The clerks and interns of the knowledge economy can become the dissidents of a new era.

But many of the technocrats already sense what is coming and prefer to prepare their escape. They buy compounds in New Zealand, secure airstrips in remote valleys, fortify estates on distant islands stocked and wired for siege. The gesture betrays everything: They, too, expect the storm. They simply mean to watch it from a safe distance — beyond the reach of the graduates, the strivers, the displaced millions who will inhabit the world their machines made. In that distance — the gap between those who build exits and those who have nowhere to go — the interregnum takes on its most recognizable shape: a society waiting, with gathering impatience and anger, for a new settlement that has yet to arrive.

Sean O’Brien, president of the Teamsters, said something recently about AI and labor that hangs in the air like a change in pressure: For once, those who have never known economic danger are about to feel what it means to be exposed — to live without insulation from the market’s weather. According to The New York Times, “The unemployment rate for college graduates ages 22 to 27 soared to 5.6 percent at the end of last year.”

For 30 years, the country has drifted ever further from the world of things. The old economy of matter — of tools, factories, and physical production — was gradually exchanged for an economy of signs. We learned to believe that the future belonged to those who trafficked in abstractions: the managers of systems, the manipulators of symbols, the custodians of information. That belief became the moral core of the professional class. To think was noble; to make was obsolete.

For decades, the professional class watched the industrial world hollow out and mistook the spectacle for confirmation of its own permanence. It confused exemption with destiny. Now, the correction is arriving — not from the shop floor, but from the circuits.

This is one meaning of the interregnum: a pause in which the old class myths no longer align with material reality, and no new story has yet cohered. In the space between, people who once felt like authors of the future are discovering that they were also characters, written into a script whose logic they did not fully control.

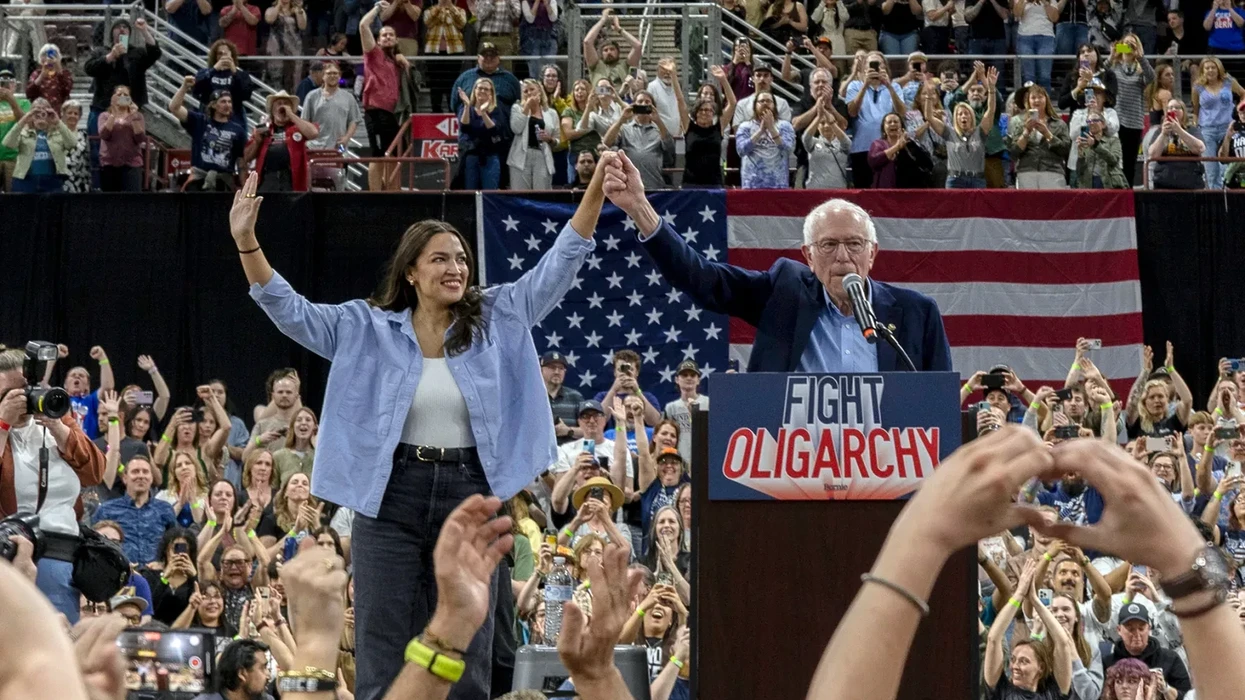

Yet another path exists, if we can summon the imagination to take it. Rather than waging a doomed Luddite resistance, we might seek a grand bargain with the architects of the new order — entering into direct negotiation with Big Tech over the political terms of the transition. The question is not whether AI can be stopped; it cannot. The question is whether its spoils can be shared.

How much of the immense stream of revenue flowing through the platforms and hyperscalers could be redirected toward a sovereign fund, a common dividend for those whose labor has been displaced? Anthropic’s Amodei has suggested a tax of three percent of AI revenues to seed the sovereign fund. It is a moment that calls less for purity than for negotiation — an uneasy but deliberate partnership between humanists and technologists, aimed at keeping a frustrated graduate class from becoming the raw material of a larger revolutionary breakdown.

Marshall McLuhan believed that new media were creating “an overwhelming, destructive maelstrom” into which we were being drawn against our will. But he also believed in a way out. “The absolute indispensability of the artist,” McLuhan wrote, “is that he alone in the encounter with the maelstrom can get the pattern recognition. He alone has the awareness to tell us what the world is made of. The artist is able [to give] … a navigational chart to get out of the maelstrom created by our own ingenuity.”

Our great inquiry now must be: How do we quit the politics of national despair — a maelstrom that our own ingenuity has created? It will be hard, because a vast media industry depends on your engagement with its outrage. Three companies — X, Meta, Google — monopolize the advertising revenue that flows from that outrage. Seventy-eight percent of Americans say these social media companies hold too much power. To break the spell, we need to understand the roots of the phony culture war they have cultivated — and remember that America has had a real promise. Only when we recover that memory can we begin to imagine what the new promise of American life might look like.