ODESA — When the team realized a drone was shadowing them, they immediately understood the danger they faced. They hoped the markings on their vehicles were visible, and that the drone operators could see the team was a group of aid workers, not combatants.

Those hopes evaporated when the drone attacked, dropping munitions that damaged one of the vehicles and severely injured an occupant. The other vehicle stopped, and the aid workers rushed to gather their wounded comrade, hoping to get him to safety. It was a race between unarmed humanitarians and implacable military machines — robotic hunters with lethal weapons directed by high-tech sensors — and losing it meant death.

The aid workers lost the race. Another drone attacked, killing the injured man and another team member. Despite their protective armor and helmets, both men suffered fatal wounds. The remaining four aid workers managed to secure their comrades and flee, and all of them were wounded in turn as waves of drones continued to chase them, until they finally lost their pursuers and hid in a nearby structure.

This story isn’t from Gaza: It took place in Ukraine on Feb. 1, when a drone attack killed two French aid workers — Adrien Baudon de Mony-Pajol, 42, and Guennadi Guermanovitch, 52 — and wounded four more staffers from HEKS/EPER, a Swiss faith-based humanitarian aid group.

“These were some of our best, most experienced people in Ukraine,” says Bernhard Kerschbaum, the head of Global Cooperation at HEKS/EPER. “Their loss is devastating.”

The attack by Israeli military forces that killed seven staff from World Central Kitchen (WCK) in Gaza on Monday followed a similar pattern: a team of aid workers pursued by a drone conducting a methodical and deliberate attack. All seven members of the WCK team were killed as they tried to escape, evacuating the wounded and the slain after successive strikes on three separate vehicles over the course of a mile, along a road designated as “accessible for humanitarian aid.”

The attack, which the Israeli military has called a “mistake,” set off a firestorm — likely due to WCK’s connection to José Andrés, the renowned chef and restaurateur who founded the nonprofit in 2010.

It’s easy to find similar “mistakes” elsewhere: Khartoum, Sudan, on Dec. 10, 2023. Kabul, Afghanistan, on Aug. 29, 2021. Idlib, Syria, on Oct. 15, 2020. Indeed, attacks on aid workers are an increasingly common feature of modern conflicts, due in large part to advances in the lethality of weaponry and tactics used by militaries, but also to a calculated dismantling of international norms and a lack of accountability for violence against noncombatants.

In 2023, the number of aid workers killed in conflict rocketed up to 260, a 120-percent increase over the previous year, according to researchers at Humanitarian Outcomes, an independent research group based in London. The group maintains the Aid Worker Security Database, which tracks global security incidents involving aid workers. One detail that jumps out in the data is a stunning 2,850-percent increase in the total number of aid workers killed by aerial bombardment in 2023 over the previous year — driven almost entirely by Israel’s brutal war in Gaza.

Last year was by far the most lethal for aid workers since the organization began compiling data — the next highest was in 2013, when 159 aid workers were killed. In the first three months of 2024, 60 aid workers were killed.

“Just by itself, the war in Gaza has seen 203 aid workers killed in six months,” Dr. Abby Stoddard, a partner at Humanitarian Outcomes, tells Rolling Stone. Apart from Gaza, South Sudan, Sudan, and Ukraine were the most dangerous locations in which aid workers operated last year.

In the grim accounting of the costs of war, it may seem morally specious to separate a specific class of noncombatant from the toll of conflicts that kill tens or even hundreds of thousands. Yet what makes attacks on aid workers especially pernicious is that these numbers represent not only the lives of the aid workers themselves, but also increased danger to a far larger at-risk civilian population suffering from the deleterious effects of war.

After all, the mission of humanitarians isn’t just to stay safe: It’s to deliver food, medicine, water and other aid. All humanitarian organizations must strike a balance between keeping staff out of harm’s way and taking the risks necessary to reach populations caught in the crossfire. Aid agencies are scrambling to adapt to an increasingly perilous landscape for their personnel.

“It’s very difficult, and when they feel they no longer can strike that balance, they pull out,” Stoddard says.

When aid workers are killed, humanitarian organizations generally cease or pause operations. After the attack on its staff, World Central Kitchen announced that it was suspending operations in Gaza. HEKS/EPER also suspended frontline activities in Ukraine after the lethal attack in February.

The conflict in Gaza, in addition to creating obvious risks for aid workers operating in an active conflict zone, has been notable for efforts by Israel and its allies to dismantle the organization responsible for providing aid to Palestinians — the United Nations Relief and Works Agency for Palestine Refugees in the Near East, commonly known as UNRWA.

The vast majority of aid workers killed in Gaza have been local employees of UNRWA, often killed while at home or off-duty, but also in the course of their work.

Israel has long criticized UNRWA and other U.N. agencies for an “anti-Israel” bias. After the Oct. 7 terror attack that killed more than 1,200 people, it directly accused several UNRWA employees of working for Hamas. The U.S. — which is the biggest donor to almost all U.N. agencies, including UNRWA — pulled funding for the agency based on Israel’s accusations. Congress later passed a law blocking any funds from being provided to UNRWA until March 2025.

This effectively dismantled the existing framework for delivering aid in Gaza.

“According to the Geneva Conventions, warring parties have legal responsibilities to facilitate humanitarian access,” Stoddard says. “Gaza is not the first conflict in which International Humanitarian Law has been violated, but it has had a resounding effect on norms. It also shows that the deconfliction system is failing.”

Aid organizations use a variety of methods to inform parties to a conflict about their activities, a process known as deconfliction. One of the most important clearinghouses for deconfliction is the U.N.-operated Humanitarian Notification System (HNS).

In Ukraine, aid organizations can use this system to inform both Ukrainian and Russian authorities about their facilities and the movement of teams conducting humanitarian operations near the frontlines.

In Gaza, aid organizations can also use HNS to inform Palestinian authorities and Israel’s COGAT, or Coordinator of Government Activities in the Territories, to keep the Israeli military apprised of their activities.

In theory, aid teams issue notifications — often including GPS data and timetables — via HNS or other established channels in advance of any movement. In the case of HNS, a notification is sent to Geneva, and from Geneva to coordinating parties on the ground, who inform local commanders in the relevant area about the impending movement of humanitarian aid workers.

So much for theory.

In practice, deconfliction is a messy and uncertain process, providing no guarantees that appropriate military personnel know about an aid team’s movements. In Ukraine, many organizations coordinate directly with local military authorities, as Moscow rarely acknowledges notifications about movements in Ukrainian-controlled territory. In Gaza, aid workers receive little information about who is being notified in which areas.

Over the past two decades, aid workers in several conflict zones have privately relayed to this reporter detailed accounts of coming under fire from belligerents despite issuing advance notifications about their operations. In cases where there are no injuries or fatalities, there is an incentive to keep these incidents quiet — access to at-risk populations often requires cooperation with the same people shooting at aid workers.

Despite efforts by humanitarian groups to adhere to deconfliction protocols, there are instances of aid workers being killed by almost every armed party in every conflict, which tempts world leaders like Israeli Prime Minister Benjamin Netanyahu to brush such incidents off.

“Unfortunately in the past day there was a tragic event in which our forces unintentionally harmed noncombatants in the Gaza Strip,” Netanyahu said in a video address after the WCK attack. “This happens in war.”

Indeed, it does.

On Oct. 3, 2015, a U.S. Special Forces team working with Afghan government troops called in an airstrike on a hospital in Kunduz. The hospital, operated by Médecins Sans Frontières — also known as MSF or Doctors Without Borders — was a declared humanitarian site, known to U.S. and Afghan authorities.

This did not prevent an AC-130 gunship from bombarding the hospital at the request of the Special Forces team, killing 14 MSF staff, 24 patients and four bystanders in a sustained attack that “repeatedly and precisely hit” the facility, even after MSF staff desperately called government contacts while under fire, notifying Washington and Kabul that their forces were attacking a legally protected humanitarian aid facility.

The U.S. military offered ever-shifting accounts justifying the attack, but Washington eventually admitted culpability for the “mistake,” offering compensation to the victims and their families. The Pentagon conducted an internal investigation, which affirmed the government’s position that the attack was simply an accident. An independent investigation has never been conducted.

That most major militaries have killed aid workers needn’t obscure matters: Legally and morally, there is absolute clarity about the obligation to protect humanitarians in war. Less straightforward is why it’s becoming ever harder to keep aid workers safe.

Part of the answer is that the numbers reflect the nature of current conflicts. The wars in Gaza and Ukraine, especially, are notable for the use of advanced drones and powerful munitions in built-up urban areas.

“One of our biggest concerns in any conflict is the use of explosive weapons with large payloads, especially those with a large blast radius or radius of lethality, as well as munitions that are delivered by barrage and cover a large area,” says Richard Weir, a senior researcher in the crisis and conflict division of Human Rights Watch. “The problem with these weapons is they create both large-scale immediate effects for civilians in terms of fatalities and injuries, as well as long-term secondary effects, such as damaged critical infrastructure.”

By far, the people most affected by explosive weapons in any conflict are noncombatants, Weir notes, pointing to research that shows “when explosive weapons were used in populated areas, over 90 percent of those killed and injured were civilians.”

“Munitions with wide-area effects increase the risk of disproportionate and indiscriminate harm to civilians,” Weir adds.

The death tolls in Gaza (estimated to be at least 30,000) and Mariupol (estimated to be between 25,000 and 75,000), where air-delivered munitions as large as 2,000 pounds have been used extensively, are testament to that.

In addition to employing rules of engagement that permit the liberal use of explosive munitions and prioritize destroying enemy personnel over protecting noncombatants, both Israel and Russia are also using tactics and technologies that put ever-increasing lethality into the hands of field commanders at lower and lower levels.

Modern militaries are endlessly working to shorten the “kill chain,” a concept that describes the process of identifying, tracking, targeting, and engaging enemy forces in the field. Creating an efficient kill chain increases the odds of destroying enemy units with stand-off or precision weapons, while protecting one’s own forces.

By flooding the battlefield with sensor platforms such as unmanned drones, military forces can call up precision fires as soon as targets appear. This gives the enemy less time to react, but it also leaves one’s own personnel less time to make decisions.

“There’s no way to predict how commanders will respond to a given situation,” says Samuel Bendett, an expert in drone warfare and unmanned systems at CNA, a federally funded research organization based in Virginia that focuses primarily on defense issues.

Shortened decision-making timelines and permissive rules of engagement increase the chances that non-military targets will be struck. That’s particularly true in conflicts like Gaza, where Israel is hunting Hamas fighters in the midst of civilians and civilian infrastructure. But it’s also true in Ukraine, where static frontlines and contested airspace mean Russia and Ukraine attack targets from extreme distances or with armed drones, often relying purely on electronic sensor data or dated visual intelligence.

“It’s likely that errors can take place as commanders face pressure to respond to tactical changes as quickly as possible,” Bendett says. “Some of this will be due to bad or incomplete intelligence. But some will also be human error, especially when dealing with technology that is becoming more and more sophisticated.”

Questions about targeting practices have become even more urgent in the wake of revelations, first reported by +972 Magazine, that Israel has been relying on an artificial intelligence system called “Lavender” to identify targets for the military.

“[Lavender’s] influence on the military’s operations was such that they essentially treated the outputs of the AI machine ‘as if it were a human decision,’” the report said, adding: “One source stated that human personnel often served only as a ‘rubber stamp’ for the machine’s decisions, adding that, normally, they would personally devote only about ‘20 seconds’ to each target before authorizing a bombing — just to make sure the Lavender-marked target is male.”

Bendett notes that reliance on such technologies will continue to grow: “And as humans will start to rely more and more on automated or AI-driven intelligence assistance, the likelihood of potential errors may also grow.”

There is no indication that the Israeli military used Lavender or automated targeting in the attack on WCK staff. An investigation conducted by an Israeli general cited human “errors.”

“Those who approved the strike were convinced that they were targeting armed Hamas operatives and not WCK employees,” Maj. Gen. Yoav Har-Even said in a statement. “The strike on the aid vehicles is a grave mistake stemming from a serious failure due to a mistaken identification, errors in decision-making, and an attack contrary to the Standard Operating Procedures.”

Multiple sources speaking to a variety of media outlets claimed that the video footage relayed by the drone was grainy, low-resolution and shot at night — a contributing factor to the “mistaken” identification.

Investigations into the February attack on the HEKS/EPER team are ongoing, and Russia has not released any specific information about the incident. But there are some details indicative of the scope of the danger faced by noncombatants, particularly aid workers who must operate near the frontlines.

“The survivors report that they were being hunted,” says Kerschbaum from HEKS/EPER. “It was an incredibly traumatic experience… We took all standard precautionary measures, but we simply weren’t prepared for the kind of environment that exists now.”

In the war in Ukraine, it is common for both sides to operate drones at a relatively high altitude, acting as reconnaissance or data transmission platforms, while coordinating multiple attack drones at lower altitudes. The number of reported drone attacks in frontline areas has increased from around 50 to more than 600 per month recently, according to Ukrainian military authorities.

Some Russian drones are able to track cell phones and identify each device’s unique International Mobile Equipment Identity (IMEI) number and its associated phone number, which can also reveal the country where the device’s SIM card was issued.

There are instances in which Ukrainian military units, international media, and aid workers have been targeted based on their cell phone emissions. Russia’s Ministry of Defense has also claimed to have targeted foreign mercenaries on multiple occasions — including a strike in Kharkiv in mid-January in which Russia said it killed dozens of French mercenaries, a claim disputed by Ukraine and France.

While it is unclear whether the HEKS/EPER team was tracked because their electronic emissions indicated the presence of French nationals, several major international aid organizations issued guidance restricting the use of foreign SIM cards in areas near the line of contact in the wake of the February incident.

Kerschbaum says he does not have sufficient information to know if the team was tracked through its electronic emissions. “But we cannot rule out the possibility,” he says.

However it happens, the killing of humanitarian workers has an immediate impact on aid deliveries.

While HEKS/EPER maintains a presence in Ukraine, the organization halted operations near the frontlines — areas where people are most in need of humanitarian assistance. The February incident also had a chilling effect on other organizations, while Ukrainian authorities increased notification requirements and tightened restrictions on the movement of aid workers in Kherson Oblast. Reduced access slows down the delivery of humanitarian assistance.

In Gaza, where WCK suspended operations, more than 1.1 million people face “catastrophic hunger,” warns the World Food Program (WFP). In the northern part of the territory, “famine is imminent,” the organization said on March 18.

The biggest challenge is access.

“WFP and our partners have food supplies ready, at the border and in the region, to feed all 2.2 million people across Gaza — but moving food into and within Gaza is like trying to navigate a maze, with obstacles at every turn,” said Carl Skau, WFP’s deputy executive director.

That the U.S., in the wake of the WCK massacre, has now successfully pressured Israel to open a border crossing at Erez and use the Port of Ashdod to deliver aid to Gaza is encouraging, but it also demonstrates how unserious previous efforts to force Netanyahu’s government to permit access to humanitarian groups have been.

Humanitarian groups in conflict zones work under challenging conditions, managing risks unimaginable to most people. While politicians talk tough, it takes professional aid workers to determine needs, disentangle logistics, deliver aid, and save lives.

International law aside, that by itself should offer a compelling reason to keep aid workers safe.

The Rise of the Digital Oligarchy

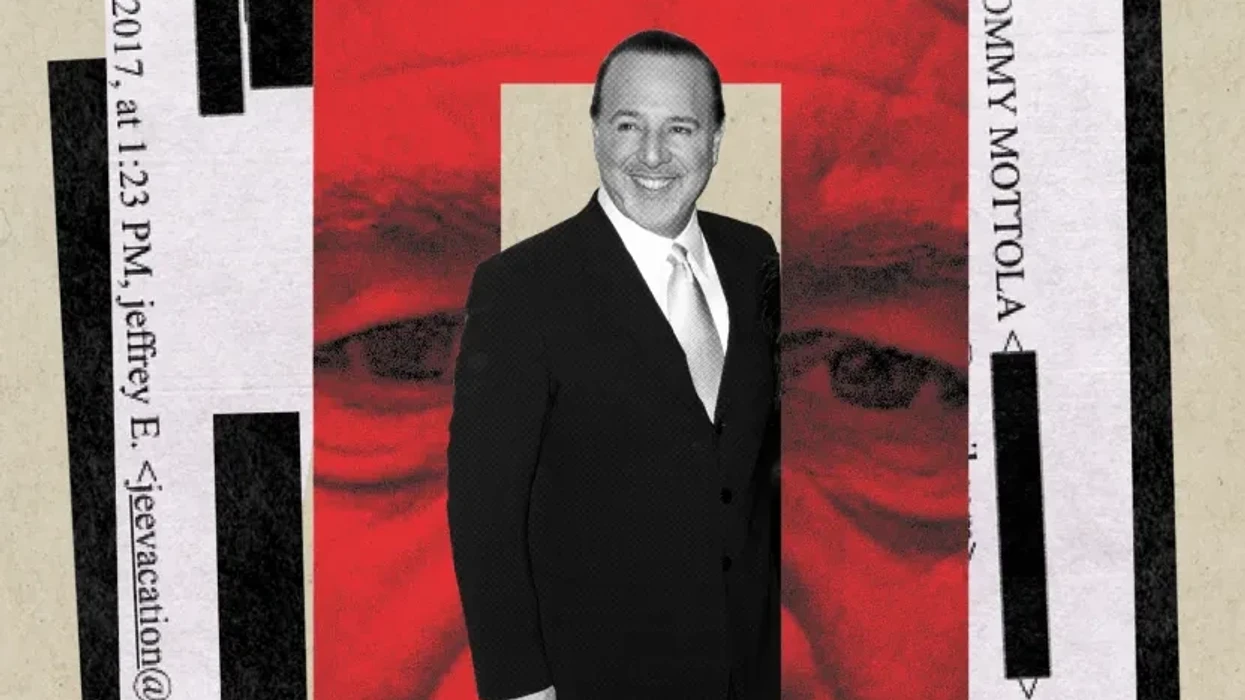

On Jan. 11, 1994, I drove to UCLA’s Royce Hall to hear Vice President Al Gore deliver the keynote address at the Information Superhighway Conference. I was in the early stages of building Intertainer, which would become one of the first video-on-demand companies. The 2,000 people crowded into that auditorium did not know it, but they were crossing a threshold. The roster of speakers read like a who’s who of industrial power: TCI’s John Malone, Rupert Murdoch, Sony’s Michael Schulhof, Barry Diller of QVC. These were among the richest and most commanding figures in American communications. Today, their combined force and fortunes are a rounding error beside Elon Musk, Mark Zuckerberg, Peter Thiel, Jensen Huang, Jeff Bezos, and Marc Andreessen. The world the Hollywood moguls walked back out into would not, in any meaningful sense, be the world they had left.

Gore’s UCLA speech now reads like a confident moment in the early-Clinton fantasia of managed modernization: the assumption that a lightly guided market, properly “incentivized,” could be coaxed into building a new civic commons. He framed the whole project as a public utility constructed with private capital, insisting that “the nation needs private investment to complete the construction of the National Information Infrastructure. And competition is the single most critical means of encouraging that private investment.” What is striking, in retrospect, is not the technophilia but the blithe certainty that “competition” would safeguard pluralism and access, that state-designed market rules would prevent the emergence of bottlenecks and private tollbooths. The actual trajectory of the internet — toward a stack dominated at each layer by a handful of firms, from carriers to platforms to ad brokers — renders the scene almost allegorical: an administration hymning competition as the guarantor of openness while midwifing, in practice, the consolidated, quasi-monopolistic order that would eventually narrow and privatize the very public sphere it imagined itself to be creating.

For the 150 years since the Industrial Revolution, Americans had trusted that science and technology would bind the nation together, just as railroads and the telegraph had once compressed its continental distances. The historian John P. Diggins observed that “whereas the very nature of politics in America implied division and conflict, science was seen as bringing forth cohesion and consensus.” That faith was about to be tested to destruction.

Within two years, Gore and Newt Gingrich collaborated to pass the Telecommunications Act of 1996, and buried inside it was a provision — Section 230 — that would prove more consequential than anything else in the bill. It granted the new platforms a liability shield unavailable to any other business in America: immunity from responsibility for the content their users generated, even as the platforms moderated and amplified it. The effect was to hand the architects of the digital age a license to build without obligation. Welcome to the Wild West; the platforms own the sheriff.

What followed was an era of rapacious accumulation. In 1994, the largest company in America by market capitalization was Exxon, valued at $34 billion. Today, Google is worth $3.7 trillion. And when Donald Trump took the oath of office in January 2025, flanked by the very technocratic elite whose fortunes had grown beyond all precedent, the possibility loomed that what the preceding decade had been building was crystallizing into something with a name: techno-fascism — an authoritarian, corporatist order in which a narrow caste of technocratic elites deploys digital infrastructure and artificial intelligence to automate governance, intensify surveillance, and erode democratic accountability, all while presenting their dominion as the neutral application of expertise.

For the past decade I have written about the almost theological divide between two competing creeds. The gospel of nostalgia promises to “make America great again” — its default logic being that the America of the 1950s, when white men’s assumptions went unchallenged by people of color, women, immigrants, or queer individuals, was a more stable and legible world worth recovering. The gospel of progress, as Andreessen has written, holds that “there is no material problem — whether created by nature or by technology — that cannot be solved with more technology.” Its default logic is simpler: stop complaining. Flat wages, rising social media–induced mental illness, falling homeownership, a warming planet — perhaps, but at least we have iPhones. The philosopher Antonio Gramsci had foreseen this dialectic in 1930: “The old is dying and the new cannot be born. In this interregnum many morbid symptoms appear.”

After the Republican midterm disappointments of 2022, Thiel called for a party that could unite “the priest, the general, and the millionaire,” a formula that reads, with hindsight, as a precise blueprint for Trump’s second administration: Christian nationalism, military force deployed at home and abroad, and a financial oligarchy powerful enough to steer the state. By the election of 2024, the gospel of nostalgia and the gospel of progress had concluded a short-term bargain to elect Trump. The result is the rise of an oligarchy of fewer than 20 American families.

The Copernican Moment

A deep unsettlement runs beneath our society today. Just as Nicolaus Copernicus displaced the Earth from the center of the cosmos, we are now displacing the human from the center of consciousness. New discoveries about cognition in other animals and organisms — octopuses dreaming, bees counting, trees retaining memory of drought — suggest, as Michael Pollan has written, that thought and feeling are not human monopolies but properties of life itself. The first Copernican revolution humbled our astronomy; the second threatens to humble our very being.

Yet the revelation carries its twin anxiety. If mind is no longer our exclusive inheritance, what becomes of that inheritance when machines begin to mimic it? Artificial intelligence poses not merely a technical challenge but a metaphysical one. It asks whether consciousness can exist without vulnerability — without the pulse and jeopardy of a life that can be lost. The Portuguese neuroscientist Antonio Damasio reminds us that the brain evolved to serve the body, that consciousness begins in feeling. Machines, however elaborate, know no hunger, no pain, no desire. To be conscious in the human sense is to participate in necessity — to be held by one’s own fate.

The real danger is not that machines will become like us, but that we will become like them: efficient, unfeeling, exquisitely programmable. A people habituated to passivity and optimized for consumption may eventually forget the work of building a world together. What once belonged to politics — the imaginative labor of collective destiny — has been quietly surrendered to the corporate logic of the algorithm. The result is not enlightenment but enclosure: a society awake to everything except itself.

This interregnum, then, is not a pause but a rupture — a suspended time in which institutions still stand yet no longer persuade, in which the future arrives in forms no one quite intended. What began for my generation as the optimistic dream of a communications revolution has matured into a general condition of American life: a digital oligarchy adrift between orders, armed with enormous power but uncertain whom, or what, it serves. Some of us glimpsed the terrible risk when it was still only a risk — that the principles of kleptocracy would become America’s own. That grim vision is now arriving, in real time, in the person of Trump. As David Frum wrote in The Atlantic, “The brazenness of the self-enrichment now underway resembles nothing from any earlier White House, but rather the corruption of a post-Soviet republic or a postcolonial state.” And the techno-fascist oligarchs are at the trough, waiting to be fed.

The Age of Surveillance and Simulation

The first clear sign that the promise of the digital commons had curdled came with Edward Snowden’s disclosures in 2013, when Americans learned that Google and Facebook had opened their back doors to the security state. What had been marketed as an architecture of connection revealed itself also as an infrastructure of monitoring.

By the mid-2020s, the fear had hardened into habit. A 2025 YouGov survey found that nearly a quarter of Americans admitted to censoring their own posts or messages for fear of being watched or doxxed. Surveillance no longer needed a knock at the door. The mere awareness of a watching eye did the work. What had been a public square had become, almost imperceptibly, a panopticon of self-restraint.

Into this apparatus stepped a new class of private overseers. Palantir, the data-mining firm Thiel co-founded, grew from a counterterrorism instrument into a generalized engine for correlating personal information — tax filings, social media traces, the bureaucratic exhaust of ordinary life. Insiders warned that data citizens had surrendered to the IRS or the Social Security Administration for basic governance could be recombined for far more intrusive purposes. The point was not simply that we were being watched, but that we were being rendered legible — sorted, scored, and classified in ways invisible to us. As Anthropic’s CEO Dario Amodei suggested to The New York Times, AI threatens to effectively nullify the Fourth Amendment’s prohibition on unreasonable searches and seizures:

It is not illegal to put cameras around everywhere in public space and record every conversation. It’s a public space — you don’t have a right to privacy in a public space. But today, the government couldn’t record that all and make sense of it. With AI, the ability to transcribe speech, to look through it, correlate it all, you could say: This person is a member of the opposition — and make a map of all 100 million. And so are you going to make a mockery of the Fourth Amendment by the technology finding technical ways around it?

We are witnessing the first serious moral battle of the AI era, and its front lines run straight through the boardrooms of Silicon Valley. Anthropic drew them first. The company refused to allow its systems to be turned on the American public in the name of security and declined to let the Pentagon wire its AI into autonomous weapons capable of identifying and killing without human authorization. To the Defense Department, accustomed to purchasing compliance along with contracts, the idea that a vendor might set moral limits on military use was borderline insubordinate. Secretary of Defense Pete Hegseth designated Anthropic a supply-chain risk to national security. President Trump, on Truth Social, called the company “radical woke” and ordered federal agencies to stop using its technology. Anthropic had been, in effect, blacklisted for conscience.

What happened next revealed something important about the moral landscape of the AI industry. OpenAI, which had publicly positioned itself as sharing Anthropic’s red lines — Sam Altman insisted his company, too, opposed mass domestic surveillance and fully autonomous weapons — moved swiftly to fill the vacuum. While Anthropic was being frozen out of Washington, D.C., OpenAI quietly negotiated and signed a deal of its own with the Pentagon, granting the Defense Department access to its models for deployment in classified environments. OpenAI then published a blog post with a pointed aside: “We don’t know why Anthropic could not reach this deal, and we hope that they and more labs will consider it.” The company that had stood shoulder to shoulder with Anthropic in principle had, in practice, used Anthropic’s exclusion to capture the contract.

The backlash was swift — and came from inside the house. Caitlin Kalinowski, who had led OpenAI’s hardware and robotics teams since late 2024, publicly announced her resignation. Her statement, posted on X and LinkedIn, was brief and precise: “AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got. This was about principle, not people.”

The formulation was careful, almost scrupulously fair to her former colleagues. But the substance was damning. A senior technical executive, one who had spent her career building the physical systems through which AI meets the real world, had concluded that OpenAI had crossed lines it had publicly promised not to cross — and had done so without the internal deliberation those lines deserved. Some users canceled their ChatGPT subscriptions in protest. Claude, Anthropic’s AI assistant, became the number-one free app in the Apple App Store, displacing ChatGPT. The market, in its way, had registered a verdict.

What the episode exposed is the hierarchy of pressures operating on every AI company at this moment. Altman’s public statements and OpenAI’s private negotiations inhabited different moral universes, and the gap between them is a measure of how quickly principle buckles under the combined weight of government contracts, competitive anxiety, and the intoxicating proximity to power. Hegseth and Trump have sent the clearest possible signal: Companies that draw lines will be punished; companies that erase them will be rewarded. The outcome of this first moral battle of the AI era will do much to determine the shape of every battle that follows.

But erasure, in this case, is not incidental — it is the business model. The questions that seem separate — who controls the weapons, who watches the citizens, who owns the culture, whose labor trains the machine — are in fact a single question, asked of us all at once: whether humanity will remain the author of its own story, or be quietly written out of it.

The Technocracy’s Bargain

Artificial intelligence functions in this landscape not only as a tool, but also as an ideology. The systems that now summarize our news, grade our tests, and generate our images are built entirely from accumulated human expression, yet are heralded as replacements for the slow, wayward work of thought. By design they remix rather than originate; they automate style while evacuating risk. The consequence is a flood of synthetic prose and imagery that feels like culture but carries none of the scars of experience. Anyone with a prompt can simulate the surface of artistry, further collapsing the distinction between the crafted and the merely produced.

We need to insist on the human self as something more than a flicker of circuitry or an echo of stimulus — to hold that our consciousness is not reducible to mechanism, that our art, our music, our capacity for beauty and sorrow carry a dignity no machine can counterfeit. We need to imagine a future in which humanity still governs its own creation — not as the object of its inventions, but as their author and their measure. A world that offers consumption in place of purpose courts a different and more corrosive kind of unrest.

The outlines of that unrest were already legible by the middle of the decade. In labor reports and think-tank bulletins one could trace the quiet unmaking of the white-collar world. Young graduates, credentialed and deeply indebted, were discovering that the jobs they had trained for no longer existed in familiar form; whole categories of administrative and creative work were being absorbed by AI or retooled around its efficiencies. Commentators spoke of an “AI job apocalypse” not as metaphor but as demographic fact — an educated stratum slipping downward, its ambitions collapsing into precarity. History offers a warning: When a surplus of the educated meets a scarcity of opportunity, turbulence and unrest follow. The clerks and interns of the knowledge economy can become the dissidents of a new era.

But many of the technocrats already sense what is coming and prefer to prepare their escape. They buy compounds in New Zealand, secure airstrips in remote valleys, fortify estates on distant islands stocked and wired for siege. The gesture betrays everything: They, too, expect the storm. They simply mean to watch it from a safe distance — beyond the reach of the graduates, the strivers, the displaced millions who will inhabit the world their machines made. In that distance — the gap between those who build exits and those who have nowhere to go — the interregnum takes on its most recognizable shape: a society waiting, with gathering impatience and anger, for a new settlement that has yet to arrive.

Sean O’Brien, president of the Teamsters, said something recently about AI and labor that hangs in the air like a change in pressure: For once, those who have never known economic danger are about to feel what it means to be exposed — to live without insulation from the market’s weather. According to The New York Times, “The unemployment rate for college graduates ages 22 to 27 soared to 5.6 percent at the end of last year.”

For 30 years, the country has drifted ever further from the world of things. The old economy of matter — of tools, factories, and physical production — was gradually exchanged for an economy of signs. We learned to believe that the future belonged to those who trafficked in abstractions: the managers of systems, the manipulators of symbols, the custodians of information. That belief became the moral core of the professional class. To think was noble; to make was obsolete.

For decades, the professional class watched the industrial world hollow out and mistook the spectacle for confirmation of its own permanence. It confused exemption with destiny. Now, the correction is arriving — not from the shop floor, but from the circuits.

This is one meaning of the interregnum: a pause in which the old class myths no longer align with material reality, and no new story has yet cohered. In the space between, people who once felt like authors of the future are discovering that they were also characters, written into a script whose logic they did not fully control.

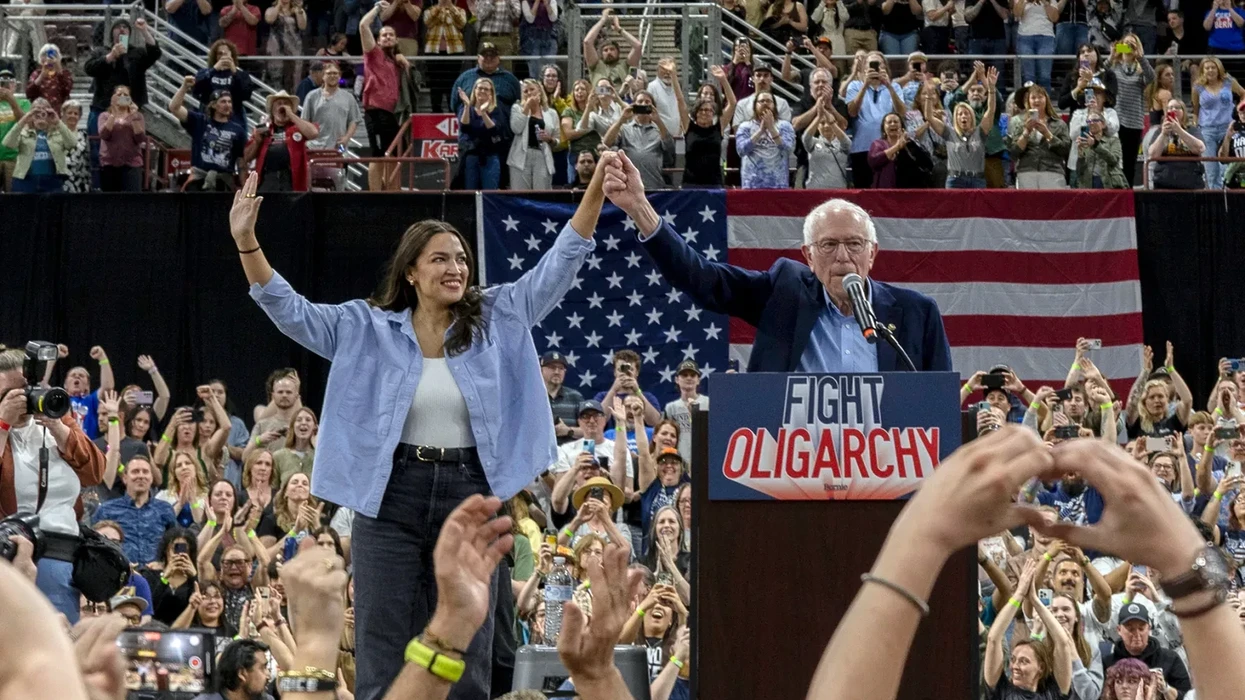

Yet another path exists, if we can summon the imagination to take it. Rather than waging a doomed Luddite resistance, we might seek a grand bargain with the architects of the new order — entering into direct negotiation with Big Tech over the political terms of the transition. The question is not whether AI can be stopped; it cannot. The question is whether its spoils can be shared.

How much of the immense stream of revenue flowing through the platforms and hyperscalers could be redirected toward a sovereign fund, a common dividend for those whose labor has been displaced? Anthropic’s Amodei has suggested a tax of three percent of AI revenues to seed the sovereign fund. It is a moment that calls less for purity than for negotiation — an uneasy but deliberate partnership between humanists and technologists, aimed at keeping a frustrated graduate class from becoming the raw material of a larger revolutionary breakdown.

Marshall McLuhan believed that new media were creating “an overwhelming, destructive maelstrom” into which we were being drawn against our will. But he also believed in a way out. “The absolute indispensability of the artist,” McLuhan wrote, “is that he alone in the encounter with the maelstrom can get the pattern recognition. He alone has the awareness to tell us what the world is made of. The artist is able [to give] … a navigational chart to get out of the maelstrom created by our own ingenuity.”

Our great inquiry now must be: How do we quit the politics of national despair — a maelstrom that our own ingenuity has created? It will be hard, because a vast media industry depends on your engagement with its outrage. Three companies — X, Meta, Google — monopolize the advertising revenue that flows from that outrage. Seventy-eight percent of Americans say these social media companies hold too much power. To break the spell, we need to understand the roots of the phony culture war they have cultivated — and remember that America once held a real promise. Only when we recover that memory can we begin to imagine what the new promise of American life might look like.